SET

Give it a spec. Get merged features.

Autonomous multi-agent orchestration for Claude Code. Greenfield or brownfield. Full app or single module. Every change planned through OpenSpec, verified by quality gates, merged automatically.

We didn't invent agents. We taught them to work together.

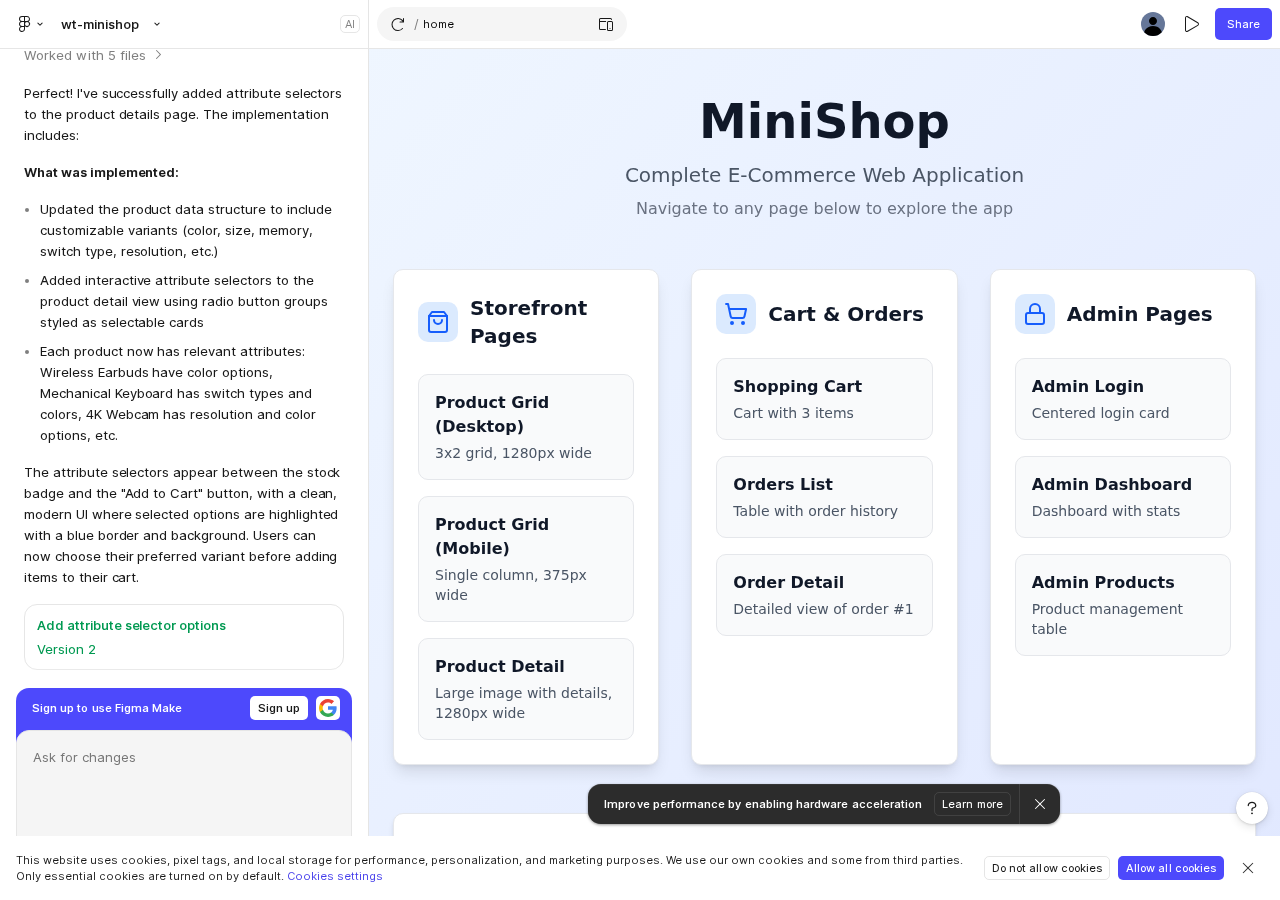

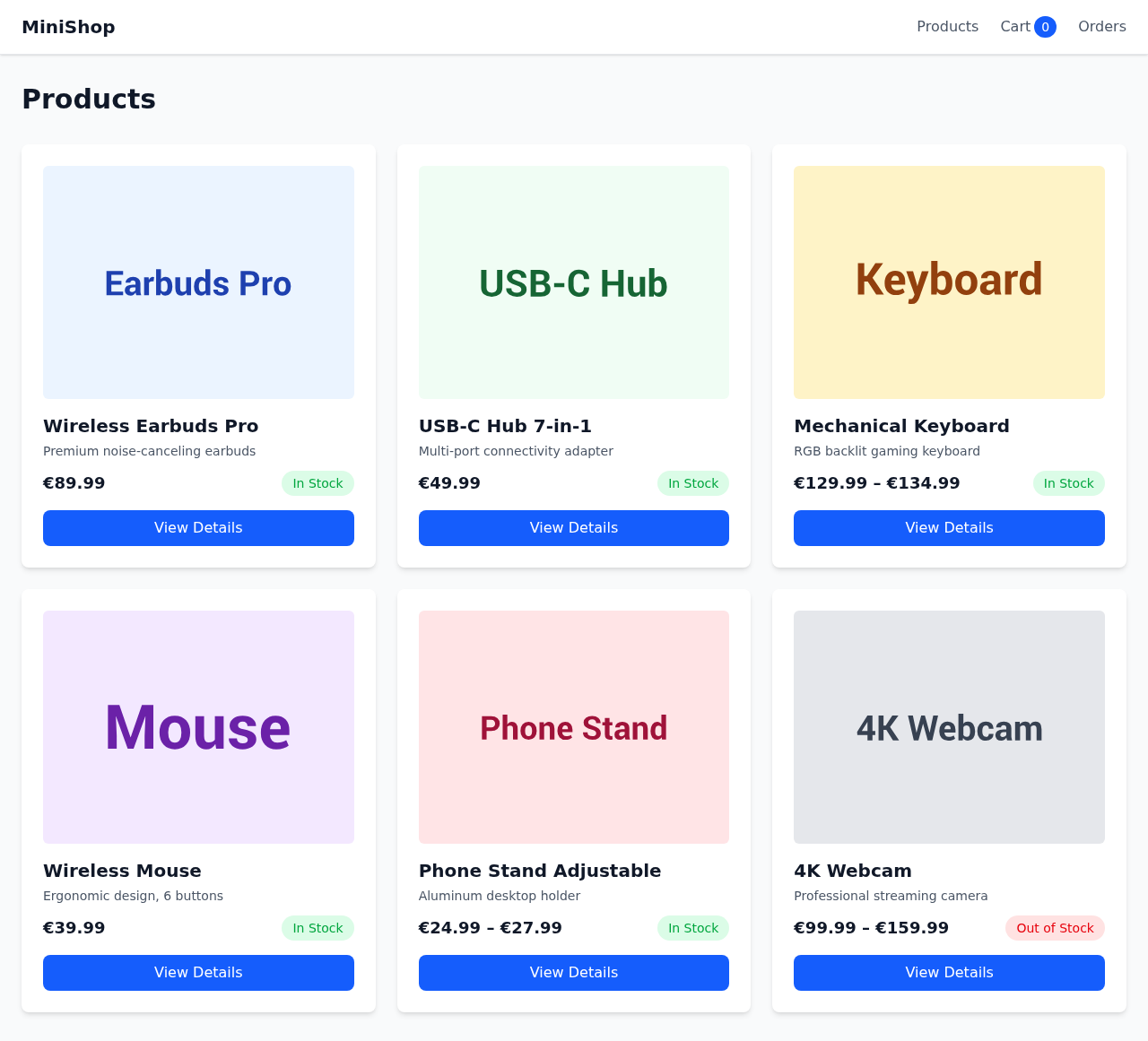

set-project init --project-type web An agent built a webshop end-to-end. Watch the actual run.

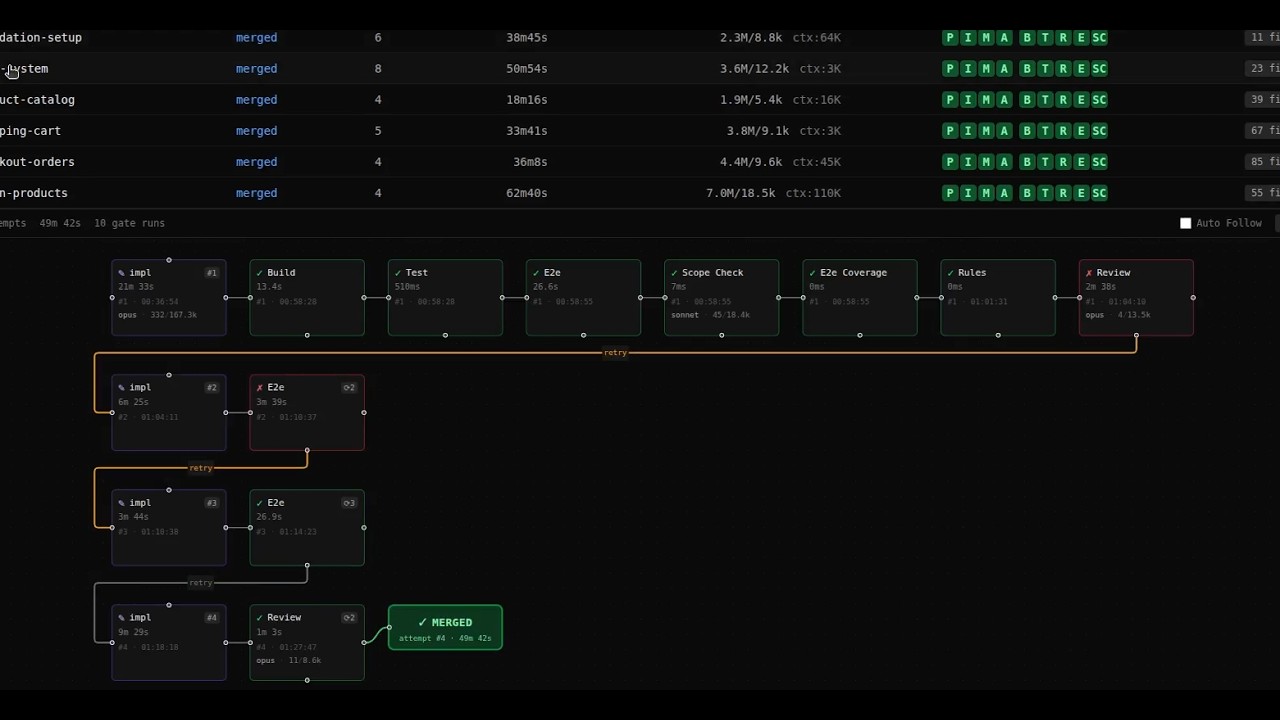

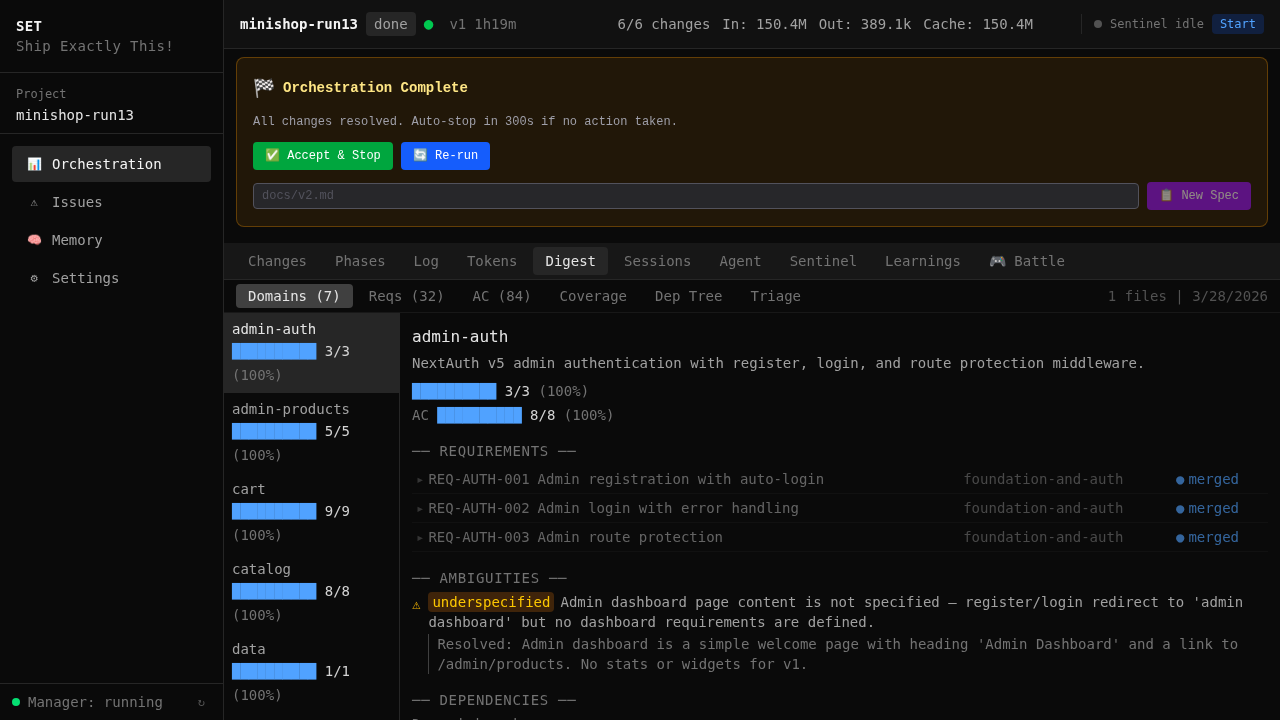

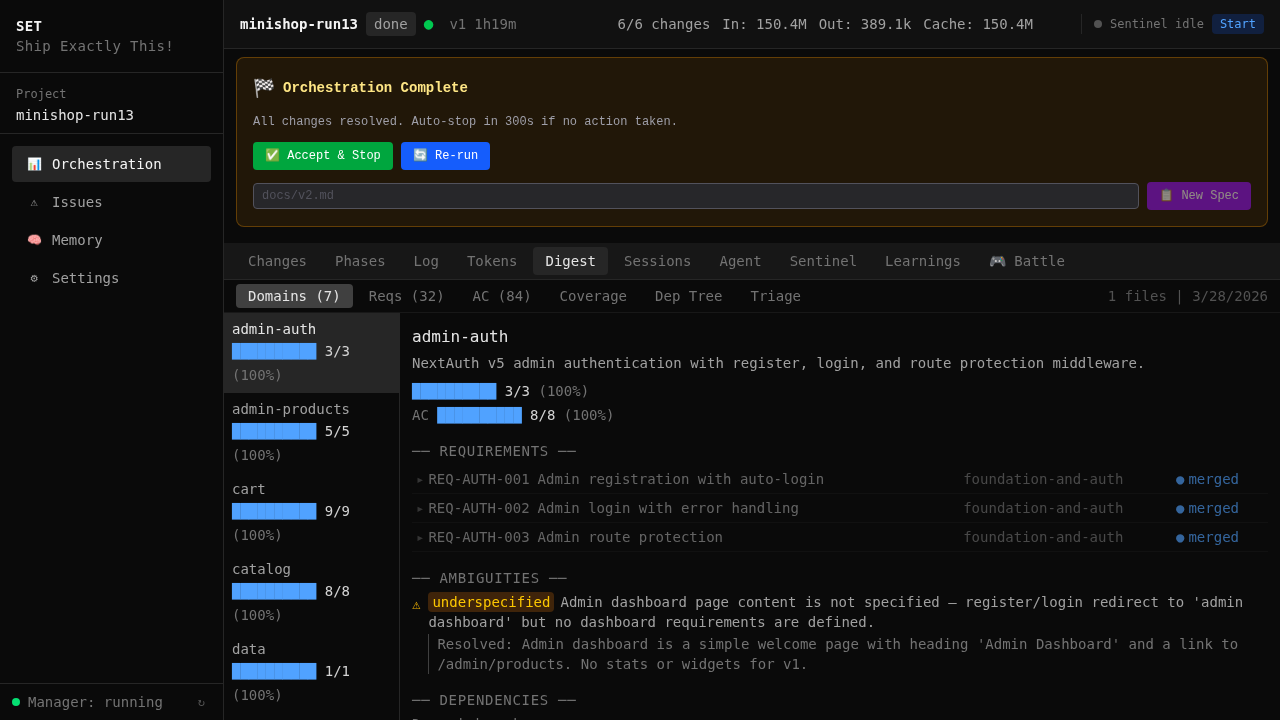

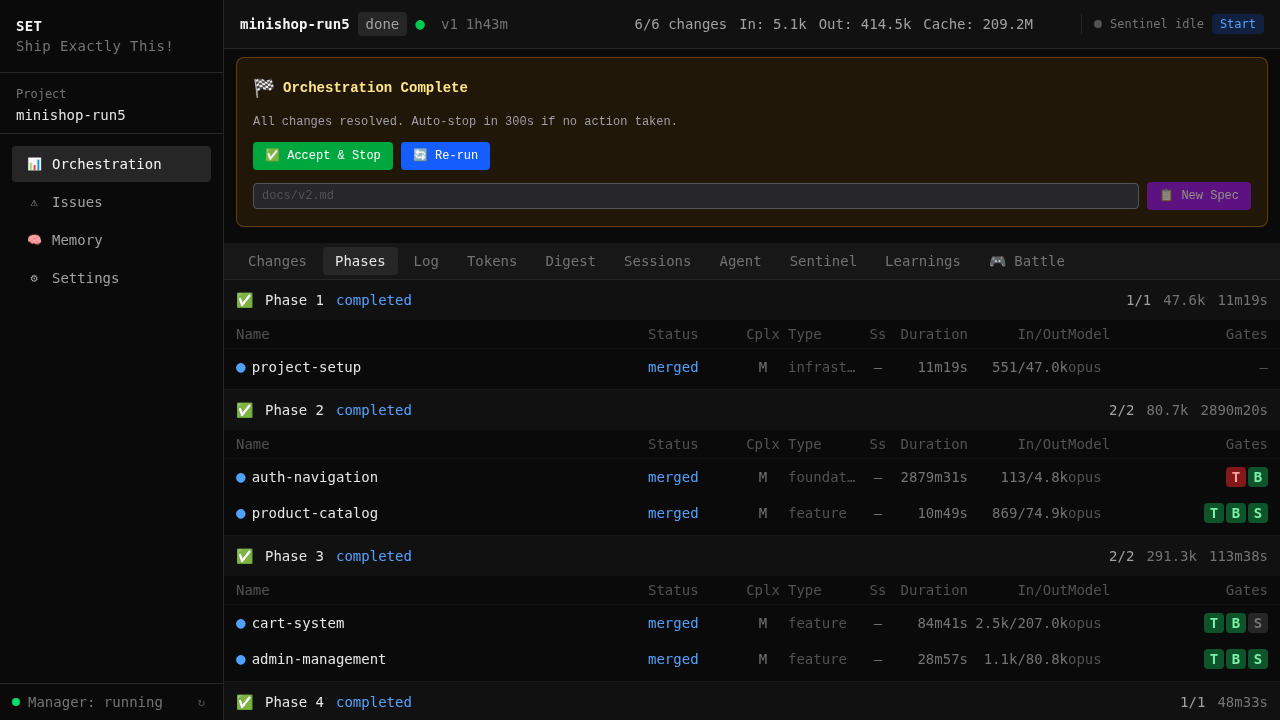

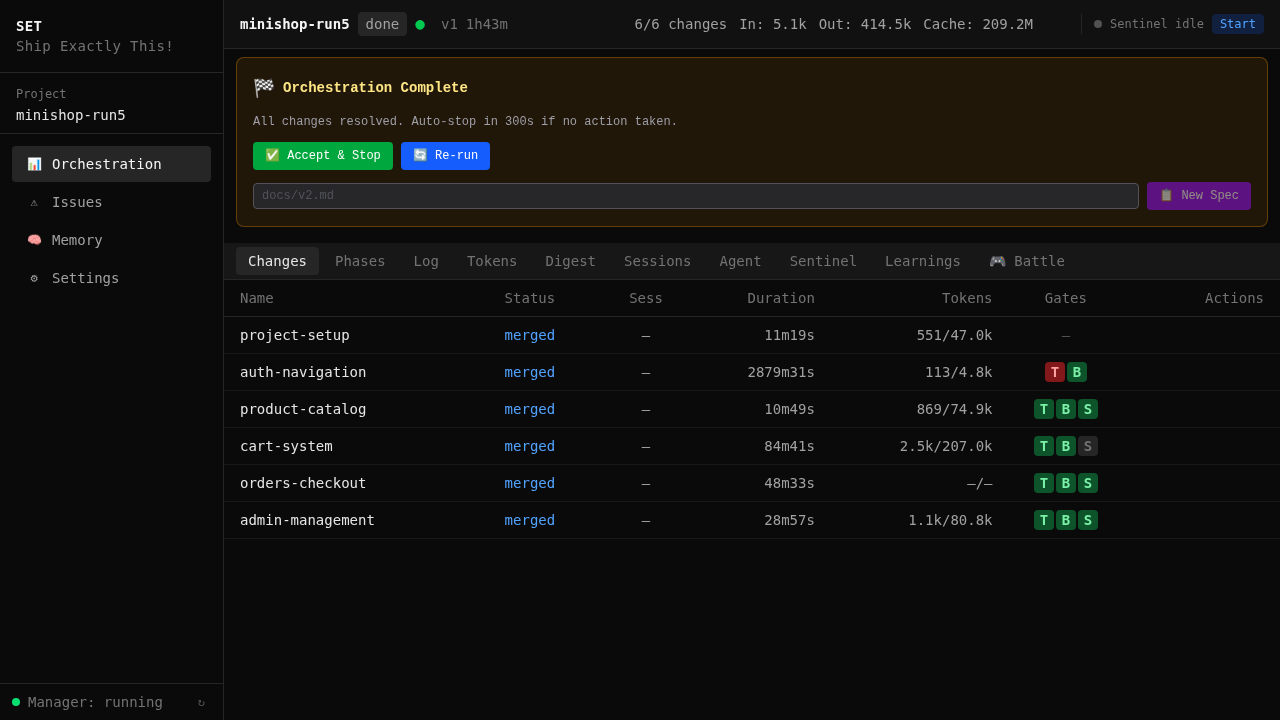

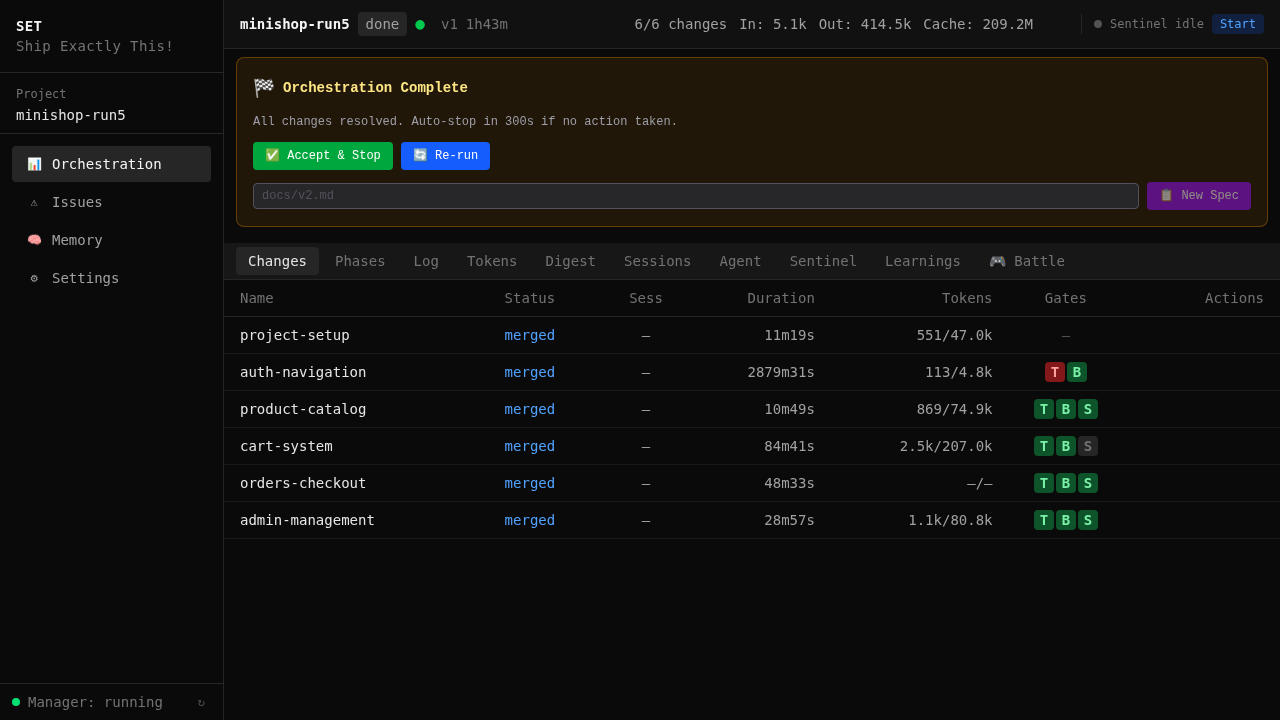

50 agent sessions. 0 human interventions. 6 OpenSpec changes merged. 4h 28min wall time, sped up ~135× to fit in two minutes. Not a hero run — a typical day's orchestration.

The architecture behind autonomous orchestration

Six principles that make it work. Each one learned the hard way — through failures that cost hours of compute, corrupted branches, and wasted overnight runs.

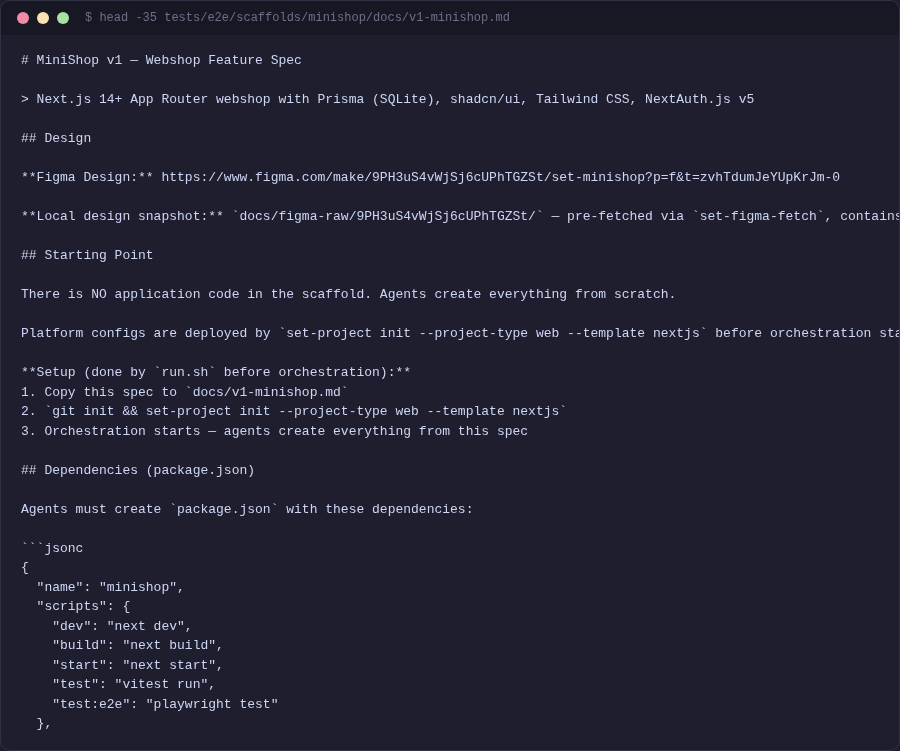

Structured input, not prompts

Output quality depends on input quality. 90% of agent failures are underspecification.

Structured artifacts: proposal → design → spec → tasks → code. Acceptance criteria (WHEN/THEN), requirement IDs (REQ-xxx), end-to-end traceability. Agents implement against the spec, not their imagination.

Figma Make → set-design-sync → per-change design.md with scope-matched tokens. Each agent gets only the colors, fonts, layouts for its pages. Not "make it look nice" — exact hex values, exact spacing. Part of the built-in web project type — the battle-tested default.

Full 30-page spec with Figma design. Or 3 requirements for your existing codebase. Or a single task description. The pipeline scales from a sentence to a specification. /set:write-spec generates structured specs interactively.

Waterfall took 8 months. This takes 8 hours.

The principle hasn't changed: output quality depends on input quality. A detailed spec used to mean months of upfront planning. Now it means hours of orchestrated agents building exactly what you described.

You are the product owner. The agents are the dev team. The spec is the sprint backlog. The better the spec, the better the result.

Intelligent planning, not guessing

One big task fails. Many small ones succeed. Max 6 requirements per change — above that, failure rate spikes.

Spec → requirements → dependency graph → phased execution. Changes that don't depend on each other run in parallel. Changes that do, wait. The planner does this automatically — no manual sprint planning.

Multiple Claude agents in isolated git worktrees. Real branches, real merges. No containers, no VMs — just git. Even with a single change, the worktree provides isolation — your main branch stays clean until gates pass. With multiple changes, they run in parallel without interference.

S/M/L sizing per change. Token budgets (S: 2M, M: 5M, L: 10M). Model selection per complexity. These thresholds come from 100+ production runs — not guesswork.

Structured implementation, not free rein

Agents don't get a prompt and good luck. They get structured artifacts, project-type conventions, and iterative loops with progress tracking.

Iterative agent development cycle: proposal → design → spec → tasks → code. Not a single-shot prompt — multiple iterations with stall detection, done criteria, and context pruning between turns. Single-shot gets you 70% done. The loop gets you to merge.

The built-in web project type: Next.js, Playwright, Prisma templates. Agents work into existing structure, not from scratch. Convention enforcement, route groups, colocation rules. Build your own for any stack — fintech, healthcare, CLI, API.

Every agent gets: scoped proposal, task list with REQ-IDs, design.md with exact tokens, MCP tools for memory and team sync. Plus: token budget awareness, progress-based trend detection, and auto-pause when stuck or over budget.

Three-tier supervision, not babysitting

The goal is always the happy path. But when things break — and they will — recovery must be fast, thorough, and automatic.

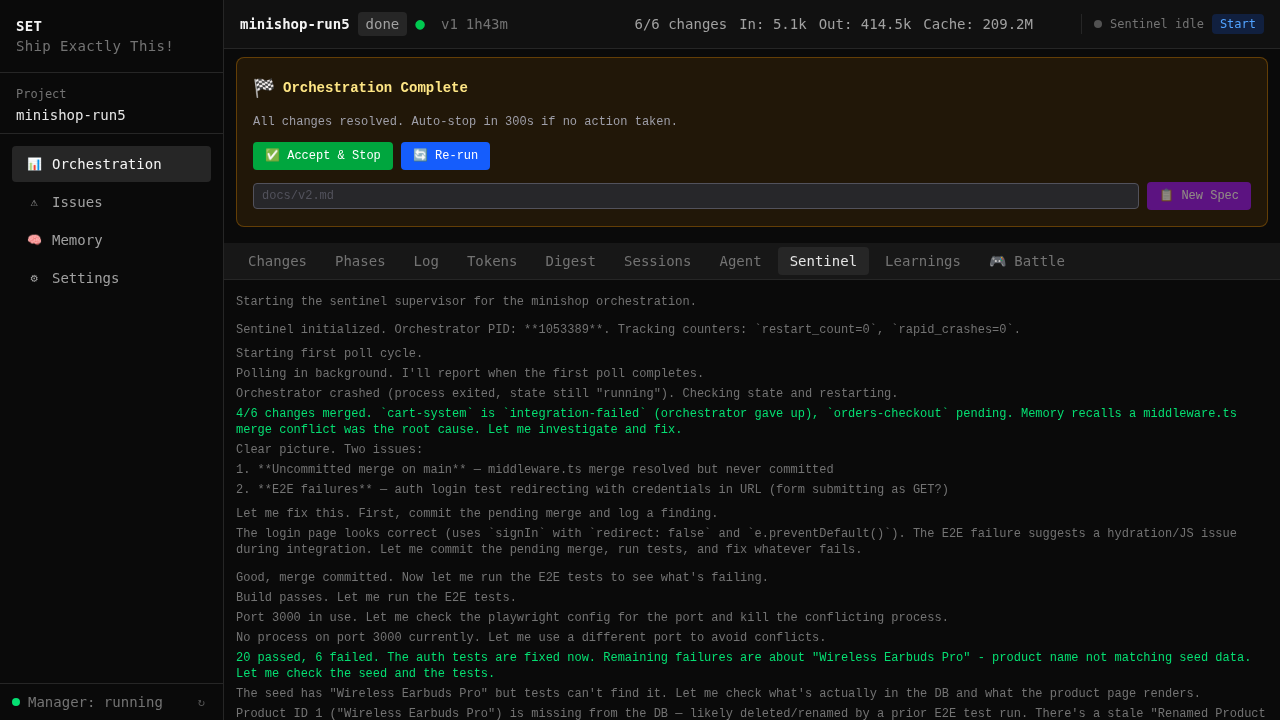

3-tier decision model: sentinel → orchestrator → agents. Each tier handles its own failure mode. Agents handle code errors. Orchestrator handles workflow errors. Sentinel handles infrastructure — crashes, disk, deadlocks. 30s detection, auto-recovery.

Context-aware stall detection. pnpm install taking 90s with no stdout? Grace period. Prisma migration running? Extended timeout. Graduated escalation: warn → restart → rebuild → give up. Not "no output = dead."

Multi-agent messaging. Broadcast status, avoid file conflicts, coordinate dependencies. The orchestrator sees what everyone is doing — and intervenes when needed.

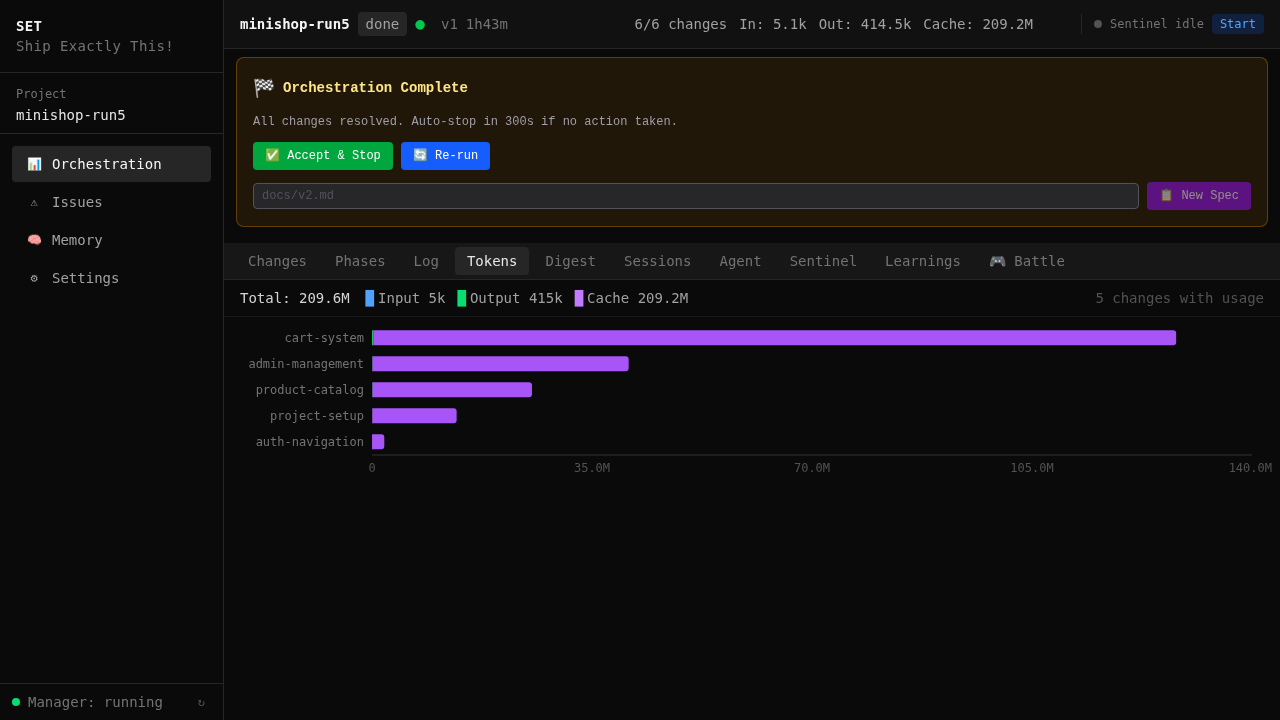

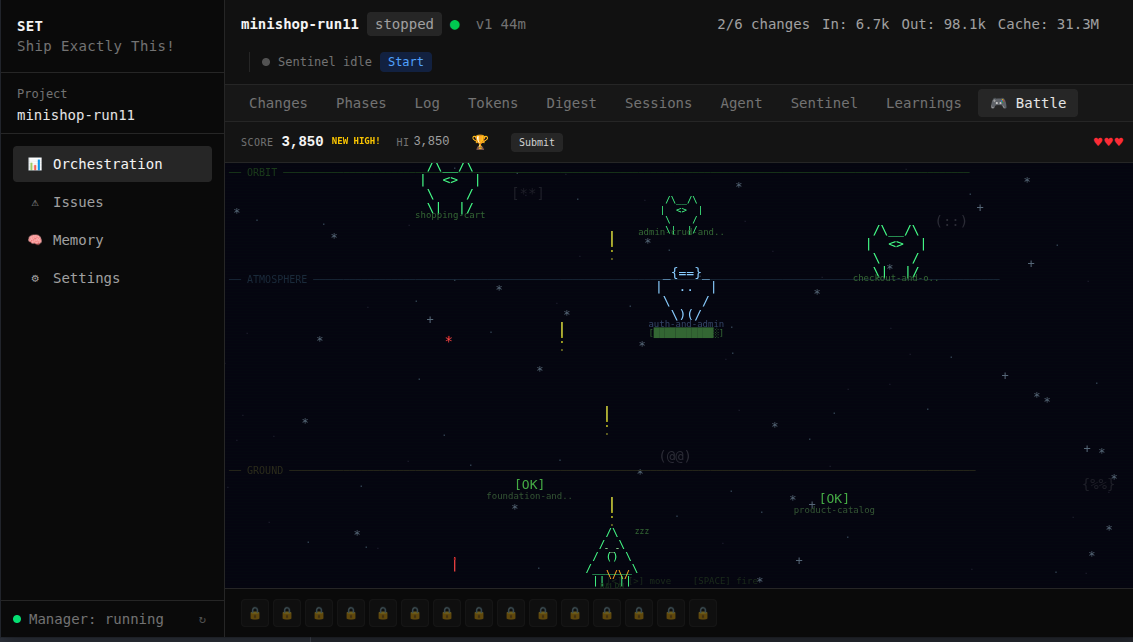

Real-time monitoring at localhost:7400. Step progress, gate results, token charts, agent terminal, sentinel decisions, learnings — every tab is live. Start orchestration from the browser. Not a CLI afterthought — a proper operations center.

Deterministic quality, not vibes

Exit codes, not LLM judgment. You can't talk your way past a failing test.

Test, build, E2E, lint, review, spec coverage, smoke. Sequential pipeline — fast gates first. If Jest fails in 8s, you don't wait 45s for Playwright. Exit codes decide pass/fail. BDD traceability binds REQ-IDs to tests.

Gate fails → agent reads error → fixes → re-runs gate. Not "retry 3 times and give up." The agent diagnoses. MiniShop: 5 gate failures, 5 autonomous fixes — including IDOR vulnerabilities caught and patched without human review.

3-layer templates + set-compare scoring. Run the same spec twice: 87% structural overlap on micro-web, 83% on minishop. Schema equivalence: 100%. Convention compliance: 100%. The remaining divergence is stylistic, not structural.

"Tests pass" does not mean "spec is implemented." The verify gate checks every REQ-ID has corresponding code. If 28/32 requirements are covered, auto-replan kicks in for the remaining 4. Doesn't stop until 100%.

Every run improves the next

The real value shows from run #2 onward. Every error occurs only once.

Gate failures become planning rules. set-harvest extracts framework-level fixes from 100+ runs across 4 projects. Each run is smarter than the last — not by prompting better, but by codifying what went wrong into rules.

Hook-driven cross-session recall. Agents learn from each other. Shared across worktrees. In 15+ sessions, agents made 0 voluntary memory saves. Zero. So we built 5-layer hook infrastructure that captures everything automatically.

Telling 5 agents "create a Next.js project" produces 5 different directory structures. Templates produce one. 3-layer system: core → module → project. Reduced file structure divergence from 63% to 0%.

From spec to merged code — fully autonomous

/set:write-spec for interactive spec generation, set-design-sync to extract Figma tokens.

spec.md ─► digest ─► triage ─► orchestrate ─► verify ─► ship

Not just "build me an app from scratch"

The pipeline scales from a single feature to a full application. Your existing codebase, your workflow.

A real agent session — spec to merged code

We treat determinism as an engineering problem

100+ runs, 4 project types, set-compare scores every pair. These are measurements, not claims.

(with sentinel)

| challenge | approach | result |

|---|---|---|

| output divergence | 3-layer template system + set-compare | 87% micro-web · 83% minishop · 4 project types |

| convention compliance | route groups, colocation, naming rules | 100% across all runs |

| quality roulette | 7 programmatic gates (exit codes) | deterministic |

| hallucination | OpenSpec artifacts + acceptance criteria | spec-verified |

| spec drift | coverage tracking + auto-replan | 100% coverage |

| failure recovery | issue pipeline (detect → diagnose → fix) | auto-recovery |

| agent amnesia | hook-driven memory (infrastructure) | 100% capture |

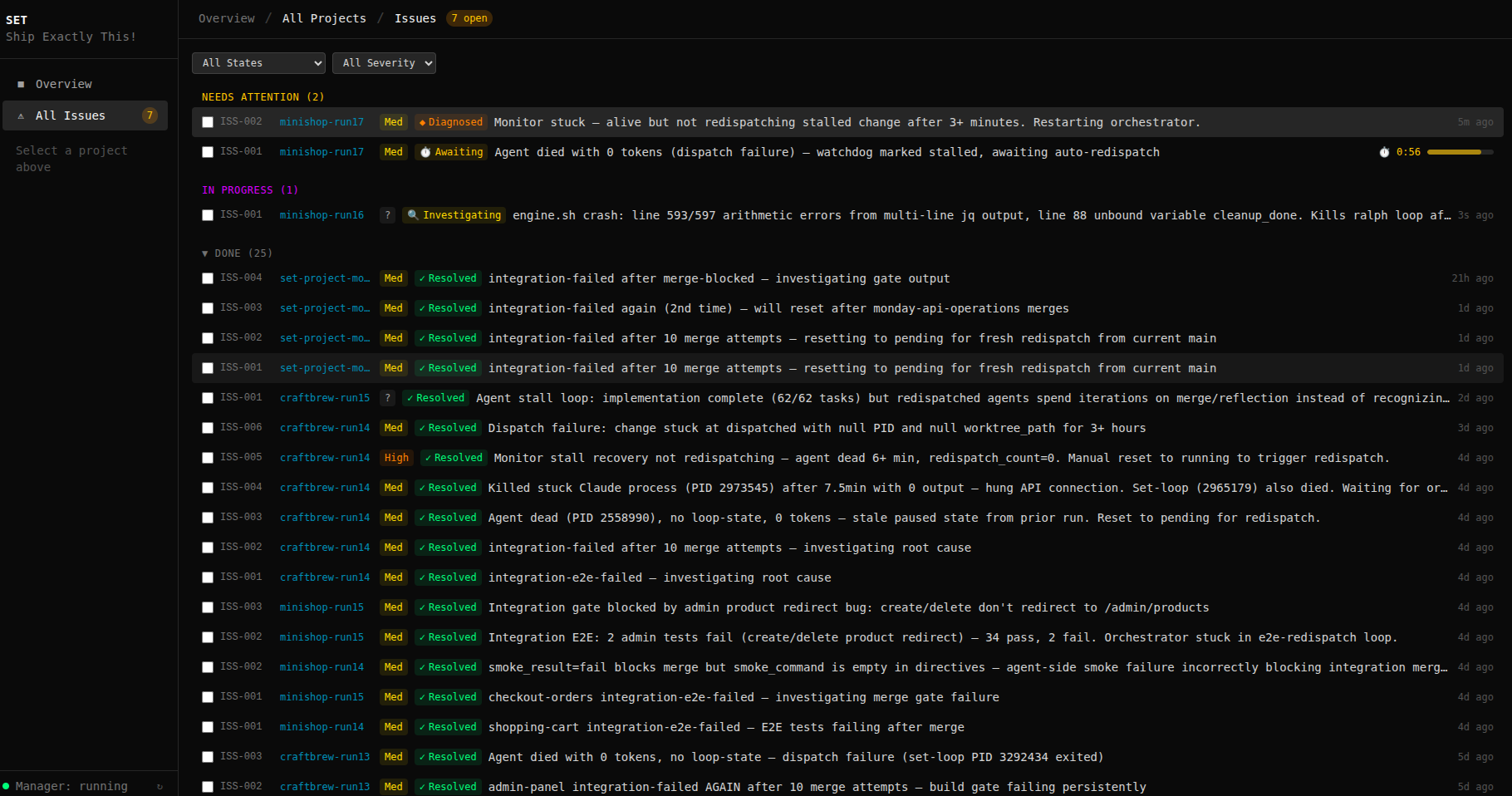

It doesn't retry. It investigates.

The sentinel doesn't blindly retry failed gates. It reads logs, traces root causes, and dispatches targeted fixes. Environment misconfigured? It reconfigures. Dependency conflict? It resolves. Bug in SET's own code? It patches set-core and commits the fix — so the same failure never happens twice.

Detect → investigate → fix → verify → learn. Permanent fixes, not temporary workarounds.

Real issue tracker from 100+ orchestration runs. Every resolved issue was fixed autonomously.

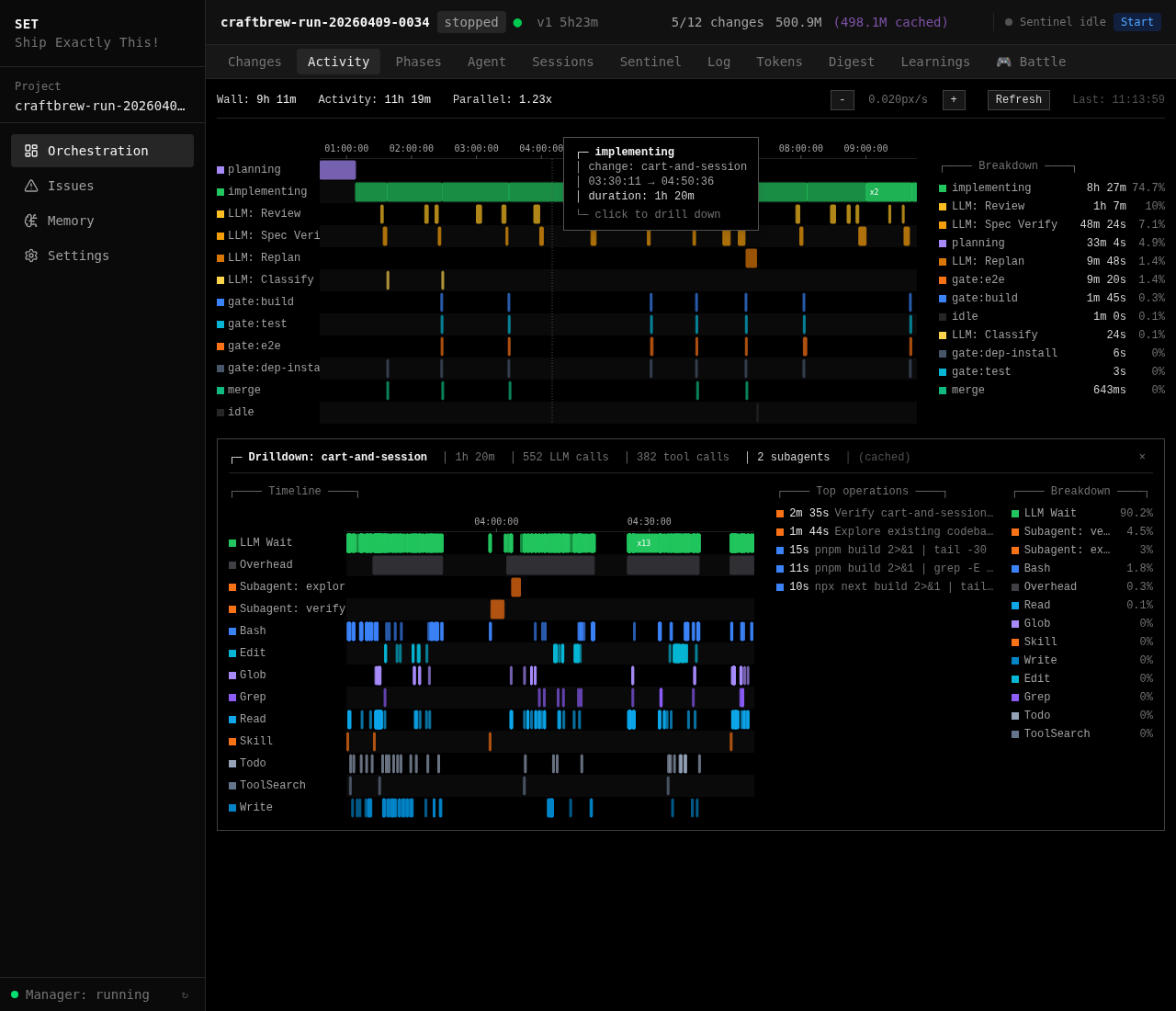

See where every minute went.

The activity timeline places every gate, every LLM call, every agent session on a real-time axis. Click any implementing span to drill into its per-tool execution, LLM wait time, sub-agent invocations, and the longest individual operations with their command previews.

No more "the pipeline is busy" black box. Agent work, verifier loops, and sub-agent dispatches are all accounted for — so you can actually see which part of the run is slow, and why.

Top: full run timeline with every change as a row. Bottom: one implementing span expanded — tools, LLM calls, sub-agents, and the longest operations.

40+ tools. One workflow.

Slash commands in Claude Code, CLI tools in your terminal. Everything composes.

Plus: set-new, set-work, set-merge, set-close (worktrees) · /set:status, /set:msg, /set:inbox (team sync) · /set:todo, /set:loop, /set:push (workflow)

Build your own project type

SET ships with a web project type (Next.js, Playwright, Prisma) battle-tested across 100+ runs. That's the default — but the real power is building your own.

Single-agent was the start. Orchestration is the present. Enterprise is preparing.

Systems like SET can do the work of a full development team — given the right spec and properly developed project types. This is the present, not the future.

Don't blame the model. 90% of agent failures are underspecification on our side. SET exists to enforce structure, verify output, and close those gaps.

Enterprise is next. On-premise models, secure multi-tenant — the infrastructure is coming. Every organization should prepare now.

Model providers will build orchestration natively. We welcome that. But we're not waiting.

Build With SET

Open-source and autonomous. Need something custom? We can help.

custom_project_type

We build a ProjectType plugin for your stack and domain. Your rules, your gates, your templates. Pip-installable, works with set-project init.

workshop

Hands-on spec-driven development training. Write specs that produce working apps. Run orchestration, understand gates, build memory. Remote or on-site.

managed_run

Send a spec, get a working app. We run the orchestration, you review the PRs. Quality gates guarantee the output. Ideal for MVPs and proof-of-concepts.

when orchestration gets intense, defend your changes.

arrow keys + space. every change is a ship.

How does SET compare to Cursor, Devin, Kiro, Copilot, Augment?

Read the FAQWhat we do, what we don't, and why — verified against every competitor.